My take on Shopify's AI-first engineering principles

What Shopify got right, what's missing, and what I'd recommend

Hey, welcome!

If you’re new here, I’m Zohar, CEO of Port. This newsletter is where I share what I’m seeing in engineering, AI, and how companies are actually running AI in their SDLC.

Bessemer published a deep dive into how Shopify runs AI across 3,000+ engineers, and it’s one of the more detailed playbooks any company has shared publicly. So I went through it, pulled out the sections that matter most, and wrote my reaction to each + a practical recommendation.

The problem I’m seeing most in the industry is agent sprawl. It looks like Shopify has avoided it.

Here’s how it plays out the bad way: Dev teams each pick their own AI tools, wire up their own integrations, and build their own agents. Suddenly you have thousands of siloed agents and a lot of them do the same thing. You don’t have visibility into what exists across the org, what actions agents can take, or what data they can access. It gets messy. Fast.

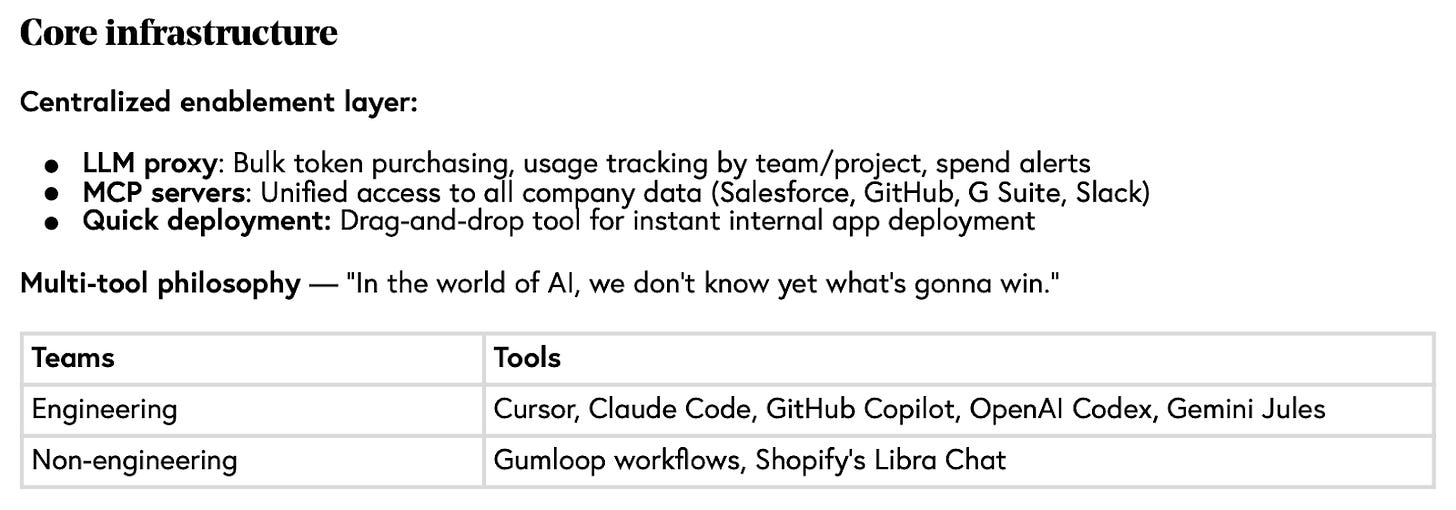

Shopify avoided this by building the infrastructure first:

An LLM proxy routing everything through a single gateway.

Standardized MCP servers connecting to company data.

Usage tracking by team + Alerts at $250/day.

Once they had the right infrastructure in place they were able to tell engineers: pick whatever tool you want on top. That’s only possible because the layer underneath is centralized. Everyone else just plugs in without worrying about getting things wrong.

Most companies do the opposite. They start with the tool. Then they might negotiate an enterprise license for Cursor or Copilot, roll it out, and hope for the best. No centralized tracking, no shared integration layer, no visibility into what’s happening across teams. When they need to switch tools or add a new one, they’re starting from scratch every time.

I think of it like going shopping. The platform team sets up the store: the data, the actions, the workflow engine. Then developers walk in and add things to their cart to build what they need. They don’t wait or file a request. They pick what they need and build an agentic workflow. But this only works if the infrastructure is already there. If it’s not, developers run ahead anyway, each team builds their own thing, and the platform team is stuck catching up.

My recommendation:

Before you evaluate another AI tool, answer three questions.

Do you have a centralized way to track which tools your teams are using?

Can you see token spend by team and project?

Do your agents connect to company data through a shared layer or through one-off integrations each team wired up?

If you can’t answer all three, start there and build infrastructure first.

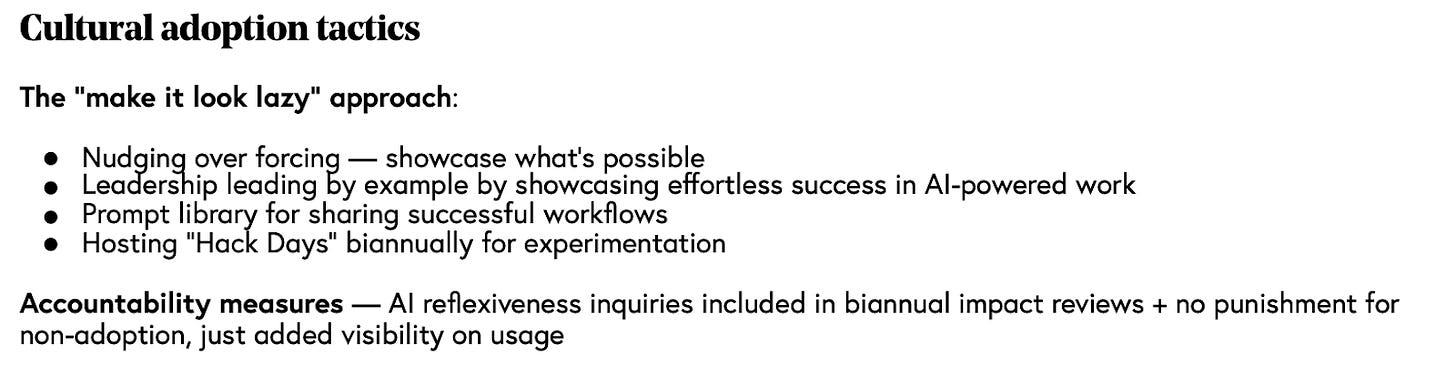

Top-down mandates don’t work for AI adoption and Shopify identified that too.

They tried the opposite: make leadership look effortless using AI, and let that pull people in. Weekly demos instead of KPIs because, as Farhan says, otherwise engineers would just game a metric.

Another clever strategy they are using is a prompt/skill library. When someone figures out a workflow they can share it with others.

Ultimately, you can’t chase developers into adopting something and this is especially true in the AI era. The best platform teams I talk to aren’t building agents themselves. They’re building the infrastructure so their developers can build agents safely. They clear the path: pre-built integrations, a workflow engine, guardrails already in place. Then they get out of the way. The platform team becomes a facilitator, not a gatekeeper.

Tobi’s memo pushed it even further: prove AI can’t do the job before requesting headcount. You can read the memo here:

My recommendation

Pick one workflow your platform team automated recently. Demo it at your next engineering all-hands. Don’t present it as a mandate. Just show the before and after. Then share the prompt or config so anyone can replicate it. One good demo does more for adoption than a month of rollout plans.

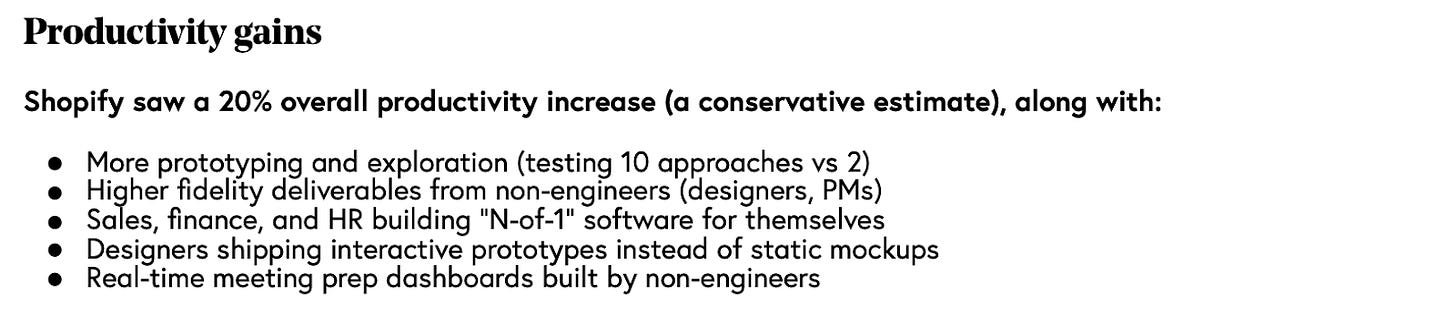

The 20% productivity number seems like the story here but it’s not. The real story is that Farhan and Shopify are focused on solutions, not more code. Customers don’t care how much code you (or AI) writes. All they care about is if you solved their problem or not.

That’s what AI is enabling: testing 10 approaches instead of 2. Shopify is telling you the unit of productivity has changed. It used to be lines of code shipped. Now it’s problems solved. And when code is cheap, you explore more broadly, which means many, many more things running in your environment, more services, more agents, more surface area to manage.

My recommendation:

Ask yourself if you are tracking vanity metrics in AI or metrics that affect the business or engineering.

Lines of code, tokens used, AI tool adoption are all vanity metrics

MTTR, Cloud cost savings, ARR are real metrics

If you’re not tracking the real ones, I suggest starting now but make sure you can connect AI initiatives to those business results. I wrote more about this here.

What Shopify is admitting here is something most companies won’t say out loud: even with a dedicated infrastructure team and unlimited budget, they got the tool-to-persona mapping wrong and had no visibility into spend until it was already a problem. Cursor for non-engineers was the wrong UX at the wrong price. Individual engineers hit tens of thousands in tokens per week before anyone noticed. They bolted on alerts at $250/day after the fact. The message is clear: if you don’t have centralized tracking from day one, you will discover your AI costs the hard way.

I talk to enterprise architects who have no idea what they’re spending on AI tokens across the org. No visibility, no throttling, no control. And these are the proactive ones, the companies trying to get governance in place before the chaos starts. Shopify had every advantage and still needed to course-correct. Most companies are running this experiment with no safety net at all.

My recommendation

Run a quick survey this week: which AI tools are your teams using, and how are they paying for them? If you get inconsistent answers or blank stares, you already have the problem Shopify had. Set up usage tracking before you set up anything else.

Farhan focuses on PR reviews as the bottleneck here. Actually, I think the problem is bigger than that. The number of things engineering needs to review or approve is exploding. Agents now write code, deploy to prod, roll back versions, remediate incidents.

If you put a human in the loop at every stage, you exhaust your engineers and fail to adopt AI at all.

The way I think about it: humans shouldn’t be in the loop. They should be out of it.

When should they be included? Well, it depends on the risk. A PR comment? Nothing bad can happen. Let the agent run, review later. An auto-remediation that restarts a service? Higher risk, but if it’s a governed action with guardrails already in place, let the agent do it and notify the team after. A prod deployment to a tier-1 service? That’s a blocking review. A human should own that decision.

The tricky part is that risk is dynamic. Deploying during a workday is very different from deploying at 2am. Same operation, different context. You can’t hardcode the rules. You need to trust AI to execute and report back, trace every decision, and measure quality over time. You’ll see where it’s reliable and where it still needs a human.

My recommendation

Map every point where a human currently blocks an agent workflow. For each one, ask: what’s the actual risk if the agent does this without approval? Start pulling humans out of the low-risk loops (PR comments, test runs, doc generation). Keep a rich audit trail so you can trace every agent decision. Build trust before moving to higher-risk operations.

Farhan says “if you don’t figure this out in 2026, you’ll be behind”.

I agree completely. Except I think it’s coming sooner.

And then what happens when an agent works well? The article is oddly silent about this.

How do you take a great agent from demo to production?

How do they work together?

Which data can they access?

Do they need humans in the loop?

The questions go on…

The platform layer is described well. The agent lifecycle is missing.

I’d be interested to learn more about how Shopify will handle this.

My recommendation

Find a recent agent someone in your company built. (Most likely, it’s still a demo or local to the team.) Then try writing a list of all the things the agent would require if you had to roll it out to the entire engineering team. You can borrow some things from my list here.

—

Shopify built all of this from scratch with 3,000 engineers and a near-unlimited budget. The pattern they arrived at, having a centralized platform underneath with tools on top, is what every engineering org will need. When that platform exists, developers start building their own agents on top of it and you stay in control.